You've just used ChatGPT, but do you know where your data goes?

The journey your information takes when using AI

There are a lot of people who do not know where their data goes when they use an AI chatbot.

They will open up ChatGPT or Claude, type in their prompt, maybe attach some files, and watch this seemingly magical artificial entity generate a response that at least aligns with, but preferably exceeds, their expectations.

But in the midst of being so mesmerised, many will not bother to think what really happens inside that black box.

Not many people will really think about what happens to their data once they submit it to the machine.

Some people may say: ‘who cares?’

And maybe this is a reasonable response. Why care about the journey your information takes when it enters the complex bowels of a language model and gets transformed into new output rendered token-by-token to gradually build a response to your query? Surely all that matters here are the ends, not the means.

But the mistake with this mindset, if you have it, is that you are making some quite stark assumptions about those means.

You are assuming that the use of your data is within the realm of your expectations.

You are assuming that the use of your data is limited to simply running inference on the model to get the answer it thinks you need.

You are assuming that the use of your data is sufficiently proper and ethical.

But what if these assumptions are wrong?

What if your data is being used for purposes that you did not expect and might even object to?

What if your data is being shared with other entities you did not even know were involved?

What if your data is out of your control?

Here is the painful truth when it comes to navigating our digital world: every time you use a digital service, you give up some data. And when you surrender your data to these digital systems, your agency over it automatically diminishes.

Moreover, you are giving your data up to systems built and controlled by others, whose incentives and priorities may not align with yours.

And control over your data also means control over your digital experience. Sometimes that experience may be absolutely fine, but the point is that this is not determined by you. It is determined by other people.

A few weeks ago, OpenAI announced that it would start displaying ads on ChatGPT.

If you have been reading my content over the past few months, you will have seen me talk about such a decision becoming a reality. Investments in AI have been enormous, fuelled by the dramatic promises of generative AI. Companies like OpenAI, with Altman as the prime advocate, have been constantly glamourising their models as magical technologies that would solve so many of humanity's great problems and become beacons of growth and progress.

But once the hype fades away, OpenAI and others were always going to need to demonstrate to their investors how they would generate returns on those investments. AI developers needed to show how they were actually going to make money with their products.

In the end, OpenAI has chosen a path that has worked very well for the tech companies before it. Google, Facebook and others turned to surveillance capitalism to strengthen their business models, involving the construction of data extraction systems that turned behavioural insights of users into revenue via targeted advertising. The cycle has simply repeated itself.

If OpenAI is doing this, then the question of what actually happens to your data when you use ChatGPT and other tools like it really does become important.

The first step to solving any problem is to understand the problem really well. To help with this, in this post I uncover the system that lies behind the chatbots that you are probably very familiar with, or have at least heard of.

I set out the different components that make up the system, how they fit and work together, and how your data moves through the system when you use it. I also look at the data risks involved and some simple steps you can take to better protect yourself.

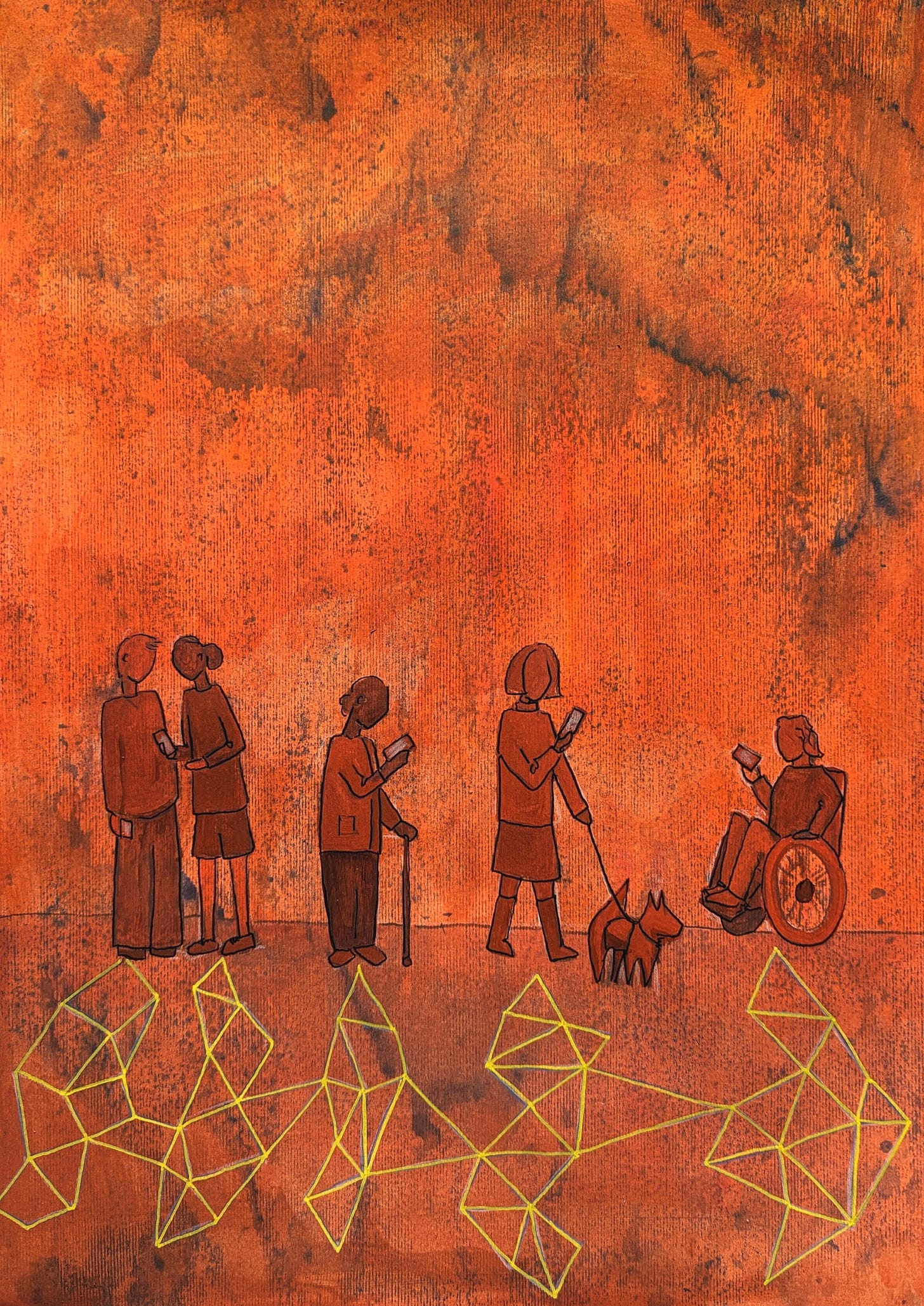

This will be relevant to everyone who uses an AI chatbot. It does not matter if you are using it for throw-away personal questions or if you are part of the ‘shadow AI’ clique at work. You need to know what happens to your data when you use a chatbot.

If you understand the systems you are engaging with, you put yourself in a better position to mitigate the risks. You cannot fully solve a problem unless you really understand the problem.

Make sure to share this newsletter with others if you find it valuable. And subscribe if you want more insights like this in your inbox every week.